This section introduces the proposed GAN-based differential privacy protection model. First, a multi-generator GAN architecture is constructed, with GA optimizing the generator cluster and gradient clipping enhancing privacy protection. Second, the privacy properties of the Differential Privacy-based Generative Adversarial Network for Healthcare Data (DP-GAN-HD) model are analyzed. Finally, the application and practical effectiveness of the model in intelligent healthcare are discussed.

GAN-based model construction

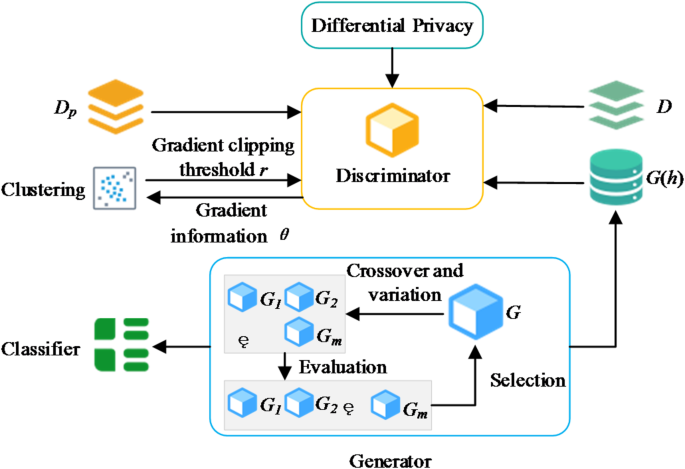

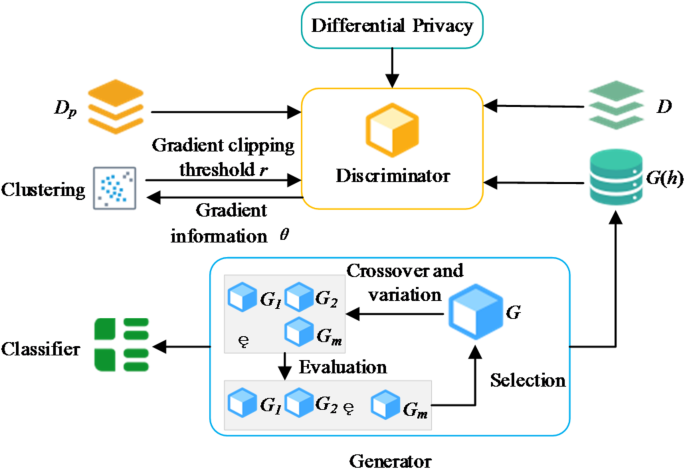

This work proposes a DP-GAN-HD model for health data protection. The proposed model consists of a discriminator module and a generator cluster module, designed to achieve privacy protection for personal health data and high-quality data generation. Figure 1 shows the architecture of the entire model.

Figure 1. DP-GAN-HD model structure.

(1) Discriminator training method.

In the DP-GAN-HD model, discriminator training is the core step in achieving data privacy protection. The discriminator receives two kinds of input: samples from the real dataset D and synthetic samples \(g(h)\) produced by the generator network \(G\), where h is a random latent vector drawn from \(p_{h}\). Its goal is to distinguish real data from generated data. With the objective written as in Eq. (1), this corresponds to maximizing \(\mathcal{L}_{D}\), or equivalently minimizing \(-\mathcal{L}_{D}\):

$$\mathcal{L}_{D}=\mathbb{E}_{x\sim D}\left[\log D(x)\right]+\mathbb{E}_{h\sim p_{h}}\left[\log\left(1-D\left(g(h)\right)\right)\right]$$

(1)
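As a rough illustration, the two expectations in Eq. (1) can be estimated by averaging over a mini-batch. The sketch below assumes the discriminator outputs probabilities in (0, 1); the function name `discriminator_objective` and the small constant `eps` that guards the logarithm are illustrative choices, not part of the original model.

```python
import math

def discriminator_objective(d_real, d_fake, eps=1e-12):
    """Mini-batch estimate of Eq. (1).

    d_real: discriminator outputs D(x) on real samples
    d_fake: discriminator outputs D(g(h)) on generated samples
    eps:    hypothetical guard against log(0)
    """
    real_term = sum(math.log(max(p, eps)) for p in d_real) / len(d_real)
    fake_term = sum(math.log(max(1.0 - p, eps)) for p in d_fake) / len(d_fake)
    return real_term + fake_term

# A discriminator that separates the two batches well scores close to 0,
# while one that outputs 0.5 everywhere scores exactly 2 * log(0.5).
confident = discriminator_objective([0.9, 0.95], [0.1, 0.05])
uninformed = discriminator_objective([0.5, 0.5], [0.5, 0.5])
```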

However, because the discriminator interacts directly with real data during training, its gradients may inadvertently reveal sensitive information. To mitigate this risk, a differential privacy mechanism is incorporated into discriminator training: Gaussian noise is injected into the gradients [28,29]. The process has three steps. First, the gradient g of the discriminator on the real samples is computed and clipped to a preset threshold r, which bounds its sensitivity. Second, noise sampled from the Gaussian distribution \(N(0,\sigma^{2}r_{p}^{2}I)\) is added to the clipped gradient. Third, the discriminator parameters are updated, and the privacy budget consumed so far is tracked with the moments accountant. These steps repeat until the privacy budget is exhausted or the maximum number of iterations is reached. A fixed clipping threshold r has a drawback, however: it may truncate gradients excessively or be distorted by outliers, which can harm the model's convergence and the quality of the generated data [30,31].
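The clip-then-perturb step can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes per-sample gradients are available as plain lists, that noise is added to the sum of clipped gradients before averaging (the usual DP-SGD convention), and that the noise standard deviation is `sigma * threshold`. The helper names are hypothetical.

```python
import math
import random

def clip_gradient(grad, threshold):
    """Scale grad so that its L2 norm does not exceed threshold."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, threshold / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]

def private_gradient(per_sample_grads, threshold, sigma, rng):
    """Clip each per-sample gradient, sum, add Gaussian noise, then average."""
    clipped = [clip_gradient(g, threshold) for g in per_sample_grads]
    dim = len(clipped[0])
    summed = [sum(g[j] for g in clipped) for j in range(dim)]
    # Assumed noise scale: standard deviation sigma * threshold per coordinate.
    noisy = [s + rng.gauss(0.0, sigma * threshold) for s in summed]
    return [v / len(clipped) for v in noisy]

rng = random.Random(0)
grads = [[3.0, 4.0], [0.3, 0.4], [-6.0, 8.0]]   # L2 norms 5.0, 0.5, 10.0
clipped = [clip_gradient(g, 1.0) for g in grads]
update = private_gradient(grads, threshold=1.0, sigma=1.1, rng=rng)
```

Gradients whose norm is already below the threshold pass through unchanged, so only the first and third gradients are rescaled here.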

An adaptive gradient clipping method based on clustering is proposed to address this issue. In each training iteration, gradients are computed on a small publicly available dataset \(D_{p}\), clustering removes outliers and noise points that do not contribute to training, and the average gradient norm of the remaining points is taken as the clipping threshold. The threshold therefore adapts to changes in the data distribution, which helps maintain training stability and the quality of the generated data while preserving privacy.

The clustering algorithm was selected for adaptability, noise handling, and unsupervised operation, and the Density-Based Spatial Clustering of Applications with Noise (DBSCAN) algorithm was chosen. DBSCAN determines cluster shapes and sizes from data density without a predefined number of clusters, so it is more flexible across datasets with different distributions than methods that require the number of clusters to be specified manually. It also identifies and removes the outliers and noise that high-dimensional data often contain, which is critical for discriminator training because outliers distort gradient computation and clipping and thus degrade model performance. Finally, DBSCAN relies on the intrinsic structure of the data rather than on label information, so it works without labeled data. No sensitive data is used in this threshold estimation step; the gradients are computed on the public dataset \(D_{p}\) only, which makes an unsupervised method such as DBSCAN a natural fit.

Concretely, in each iteration a batch is sampled from the public dataset \(D_{p}\), and its gradients are computed and clustered with DBSCAN, which marks low-density points as noise so that they do not distort the threshold. After the noise points are removed, the average gradient norm C of the L remaining samples is computed and used as the clipping threshold:

$$C\approx\frac{1}{L}\sum_{i=1}^{L}\left\lVert g(x_{i})\right\rVert_{2}$$

(2)

Subsequently, the gradients of sensitive data are clipped, and noise is added using this threshold. This approach allows the clipping threshold to dynamically adapt to the data distribution characteristics, thus enhancing model performance while protecting privacy.
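The threshold estimate in Eq. (2) can be sketched as below. To keep the example dependency-free it includes a minimal DBSCAN written for illustration; in practice a library implementation such as scikit-learn's DBSCAN would normally be used. The parameter values `eps=0.5` and `min_samples=3`, the toy gradients, and the fallback when every point is labeled noise are all assumptions made for this sketch.

```python
import math

def dbscan_labels(points, eps, min_samples):
    """Minimal DBSCAN: returns one cluster id per point, -1 for noise."""
    n = len(points)
    neighbors = [
        [j for j in range(n) if math.dist(points[i], points[j]) <= eps]
        for i in range(n)
    ]
    labels = [None] * n                  # None means "not visited yet"
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbors[i]) < min_samples:
            labels[i] = -1               # provisional noise, may become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = list(neighbors[i])
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster      # border point reached from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            if len(neighbors[j]) >= min_samples:
                queue.extend(neighbors[j])   # j is a core point, keep expanding
    return labels

def l2_norm(vec):
    return math.sqrt(sum(v * v for v in vec))

def adaptive_clip_threshold(public_grads, eps, min_samples):
    """Eq. (2): mean gradient norm over the points DBSCAN does not mark as noise."""
    labels = dbscan_labels(public_grads, eps, min_samples)
    kept = [g for g, lab in zip(public_grads, labels) if lab != -1]
    if not kept:                         # assumed fallback: keep everything
        kept = public_grads
    return sum(l2_norm(g) for g in kept) / len(kept)

# Four similar public-data gradients and one extreme outlier (norm 50).
public_grads = [[1.0, 0.0], [0.9, 0.1], [1.1, -0.1], [1.0, 0.1], [30.0, 40.0]]
labels = dbscan_labels(public_grads, eps=0.5, min_samples=3)
threshold = adaptive_clip_threshold(public_grads, eps=0.5, min_samples=3)
naive_mean = sum(l2_norm(g) for g in public_grads) / len(public_grads)
```

In this toy batch the outlier is labeled noise, so the threshold stays close to 1, whereas the unfiltered mean norm is pulled above 10.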

(2) GA-based generator cluster.

The generator's training effectiveness directly determines the quality of the published data, but differential privacy constraints limit its training efficiency. The noise added to the discriminator's gradients disrupts the feedback signal, making it difficult for the generator to fully exploit the feedback provided by the discriminator. Moreover, the privacy budget limits the number of training iterations, which affects the generator's convergence and the overall quality of the generated data.

Genetic algorithms (GAs) are well suited to this setting. First, a GA can handle highly nonlinear, complex optimization problems, and its global search helps it avoid the local optima in which conventional gradient descent may stall when gradients are perturbed by differential privacy noise. Second, a GA does not rely on gradient information, so its optimization remains more stable under the privacy protection mechanism. Third, crossover and mutation introduce diversity into generator training, improving the quality and diversity of the generated data. Finally, global search lets the GA allocate resources among multiple generators and select, for the next iteration, the generators best suited to the current data distribution. Although a GA has higher computational complexity, it enables collaborative optimization in a multi-generator architecture and ultimately yields higher-quality synthetic data. This work therefore proposes a GA-based dynamic selection method for multiple generators, which optimizes generator performance through a simulated natural evolution process.

The core idea of this method is to treat the generator cluster as a population in which each generator represents a candidate solution, and to optimize the generator parameters dynamically through the GA's selection, crossover, and mutation operations [32,33]. Specifically, the generator set \(\{G_{1},G_{2},\cdots,G_{m}\}\) consists of m generators. Each generator \(G_{i}\) is parameterized as \(G_{i}\sim(a_{i},w_{i})\), where \(a_{i}\) denotes the network architecture and \(w_{i}\) the weight parameters. The GA's optimization goal is to search the generator parameter space heuristically so that the generators capture the distribution of the real data.

During the evaluation phase, each generator \(\:{G}_{i}\) in the generator cluster is assessed using a fitness function \(\:F\left({G}_{i}\right)\). The fitness function is defined as:

$$\:F\left({G}_{i}\right)={V}_{w}({G}_{i}\left(h\right);{w}_{i})$$

(3)

\(\:{V}_{w}\) is the validation function used to assess the quality of the generated data \(\:{G}_{i}\left(h\right)\). The validation function is implemented using a classifier Q trained on an external validation set, with the input being the generated data and the output being the classification accuracy. The training of classifier Q is based on publicly available data, without involving sensitive information, thus not introducing privacy risks. The generator’s performance is quantified through the validation function, providing a basis for subsequent selection.

In the selection phase, the generator cluster is ranked based on fitness scores, and the generators with higher scores are retained for the next round of iteration. The selection process can be formally represented as:

$$\{F_{G_{1}},F_{G_{2}},\cdots,F_{G_{m}}\}\leftarrow\operatorname{sort}\left(\{F(G_{i})\}\right)$$

(4)

Generators with lower scores are eliminated, while those with higher scores are selected as parents to participate in subsequent crossover and mutation operations.
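The evaluation and selection phases can be sketched together. The example below is a toy stand-in under several assumptions: each generator returns a (feature, label) pair, the classifier Q is a fixed threshold rule rather than a trained model, fitness is the fraction of generated samples whose label agrees with Q, and the top two generators are kept. All names are hypothetical.

```python
def fitness(generator, classifier, latent_batch):
    """Stand-in for Eq. (3): accuracy of classifier Q on one generator's samples."""
    samples = [generator(h) for h in latent_batch]   # each sample is (feature, label)
    correct = sum(1 for x, y in samples if classifier(x) == y)
    return correct / len(samples)

def classifier_q(x):
    """Toy classifier Q, assumed to have been trained on public data."""
    return 1 if x > 0 else 0

# Three toy generators that label their own samples with varying consistency.
generators = {
    "G1": lambda h: (h, 0),
    "G2": lambda h: (h, 1 if h > 0 else 0),
    "G3": lambda h: (h, 1 if h > 1 else 0),
}
latent_batch = [-2.0, -1.0, 0.5, 1.5]

scores = {name: fitness(g, classifier_q, latent_batch) for name, g in generators.items()}
ranked = sorted(scores, key=scores.get, reverse=True)   # Eq. (4): sort by fitness
parents = ranked[:2]                                    # lowest-scoring generator is dropped
```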

In the crossover phase, selected parent generators \(G_{a}\) and \(G_{b}\) produce new offspring generators through parameter recombination. Specifically, the generator parameters \(\theta_{a}\) and \(\theta_{b}\) are flattened into one-dimensional vectors, and the two vectors are recombined at a random split point, producing offspring \(G_{c}\) and \(G_{d}\). In the mutation phase, Gaussian noise \(N(0,\lambda^{2})\) is added to the generator parameters at randomly chosen positions, where \(\lambda\) is the noise amplitude hyperparameter. Through crossover and mutation, the generator cluster can explore a broader region of the parameter space, enhancing the quality and diversity of the generated data.
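A minimal sketch of these two operators on flattened parameter vectors is given below. It assumes both parents have the same number of parameters, and it introduces a hypothetical per-parameter mutation probability `rate` to model "random points", since the text does not specify how positions are chosen.

```python
import random

def crossover(theta_a, theta_b, rng):
    """Single-point crossover on two flattened parameter vectors of equal length."""
    split = rng.randrange(1, len(theta_a))     # split point strictly inside the vector
    child_c = theta_a[:split] + theta_b[split:]
    child_d = theta_b[:split] + theta_a[split:]
    return child_c, child_d

def mutate(theta, lam, rate, rng):
    """Add N(0, lam^2) noise to each parameter with probability rate (assumed)."""
    return [w + rng.gauss(0.0, lam) if rng.random() < rate else w for w in theta]

rng = random.Random(42)
theta_a = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
theta_b = [-1.0, -2.0, -3.0, -4.0, -5.0, -6.0]

child_c, child_d = crossover(theta_a, theta_b, rng)
mutated_c = mutate(child_c, lam=0.1, rate=0.5, rng=rng)
```

Each offspring takes its leading parameters from one parent and its trailing parameters from the other, so at every position the two children together hold exactly the two parent values.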

Privacy analysis of the DP-GAN-HD model

The privacy analysis evaluates how well the DP-GAN-HD model protects personal health data under the definition of differential privacy, whose core idea is to quantify privacy loss in order to control the risk of data leakage. For two neighboring datasets D and D’, and an output \(o\) of a randomized mechanism M, the privacy loss is defined as:

$$c\left(o;D,D'\right)=\ln\frac{\Pr[M(D)=o]}{\Pr[M(D')=o]}$$

(5)

D and D’ are neighboring in the sense that they differ in at most one record:

$$\left\lVert D-D'\right\rVert\le 1$$

(6)

This metric reflects the difference in the model’s outputs between two adjacent datasets, thus helping to quantify the risk of privacy leakage.
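To make the definition in Eq. (5) concrete, the sketch below evaluates the privacy loss for randomized response on a single bit. This mechanism is swapped in purely for illustration because its output probabilities are easy to write down; it is not the mechanism used by DP-GAN-HD. The truth-telling probability 0.75 is an arbitrary choice.

```python
import math

def privacy_loss(prob_o_given_d, prob_o_given_d_prime):
    """Eq. (5): log-ratio of the probabilities of one output under D and D'."""
    return math.log(prob_o_given_d / prob_o_given_d_prime)

# Randomized response: report the true bit with probability p_truth, else flip it.
# D holds the bit 1 and the neighboring dataset D' holds the bit 0.
p_truth = 0.75
loss_output_1 = privacy_loss(p_truth, 1 - p_truth)   # Pr[M(D)=1] / Pr[M(D')=1]
loss_output_0 = privacy_loss(1 - p_truth, p_truth)   # Pr[M(D)=0] / Pr[M(D')=0]
worst_case = max(abs(loss_output_1), abs(loss_output_0))
```

The worst-case loss over all outputs is ln(0.75 / 0.25) = ln 3, so this toy mechanism satisfies pure differential privacy with a privacy parameter of ln 3.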

The privacy protection of the DP-GAN-HD model covers two stages: training and data generation. During training, only the discriminator interacts directly with the original sensitive dataset D, while the generator is trained solely on feedback from the discriminator. To ensure privacy, Gaussian noise \(N(0,\sigma^{2}c_{p}^{2}I)\) is added during discriminator training, where \(\sigma=\frac{\sqrt{2\log\left(\frac{1.25}{\delta}\right)}}{\epsilon}\), so the noise standard deviation is controlled by the privacy parameters \(\epsilon\) and \(\delta\). Gradient clipping bounds the sensitivity of each update; together with the noise, it makes every iteration satisfy \((\epsilon,\delta)\)-differential privacy, which bounds how much any single record can influence what is learned during training. The model quantifies the privacy loss of each iteration and accumulates it over iterations. With batch sampling probability \(q=\frac{m}{n}\) (where m here denotes the batch size and n the dataset size), the privacy budget is consumed gradually, and after T training iterations the model as a whole satisfies \((O(q\epsilon\sqrt{T}),\delta)\)-differential privacy.
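The two formulas in this paragraph can be evaluated numerically as a quick sanity check. The sketch below takes the logarithm to be natural and treats the constant hidden by the O-notation as 1, which is an assumption made only so that a number can be printed; the actual budget would come from a privacy accountant. The function names and the example values are hypothetical.

```python
import math

def gaussian_sigma(epsilon, delta):
    """Noise multiplier sigma = sqrt(2 * ln(1.25 / delta)) / epsilon."""
    return math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon

def composed_epsilon_order(q, epsilon, steps, constant=1.0):
    """Order-of-magnitude budget q * epsilon * sqrt(T); `constant` is assumed."""
    return constant * q * epsilon * math.sqrt(steps)

epsilon, delta = 0.5, 1e-5
sigma = gaussian_sigma(epsilon, delta)

q = 64 / 10_000            # sampling ratio: batch size over dataset size
total_order = composed_epsilon_order(q, epsilon, steps=10_000)
```

Halving the per-step epsilon doubles sigma, while the overall budget grows only with the square root of the number of iterations.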

During the data generation phase, the generator receives differentially private feedback from the discriminator without directly accessing the original data. According to the post-processing property of differential privacy, the data generated by the generator does not introduce additional privacy loss and, therefore, does not consume extra privacy budget. This ensures that the data generated during this phase does not increase the risk of privacy leakage, thus maintaining the continuity of privacy protection.

Based on the above analysis, the DP-GAN-HD model satisfies differential privacy requirements during the training and generation phases. By applying gradient clipping and Gaussian noise perturbation during training, the model ensures privacy security. At the same time, the post-processing property prevents further privacy leakage in the generation phase. As a result, the DP-GAN-HD model can generate high-quality health data while preserving privacy, effectively balancing the trade-off between privacy protection and data utility.

Application of the DP-GAN-HD model in intelligent healthcare

The DP-GAN-HD model has broad application potential in intelligent healthcare. Its core advantage lies in its ability to generate high-quality synthetic data while protecting the privacy of personal health data, thus supporting medical research and practice. The DP-GAN-HD model can be applied in the following scenarios within intelligent healthcare:

(1) Disease Prediction and Diagnosis. The DP-GAN-HD model can generate synthetic data that closely resembles the distribution of real health data, which can be employed to train disease prediction models. For instance, in the early prediction of chronic diseases such as diabetes and cardiovascular diseases, the generated data can compensate for the shortage of real data while avoiding privacy risks. Combined with ML algorithms, the generated data can enhance the accuracy and reliability of disease diagnosis.

(2) Personalized Treatment Plan Design. Using the generated high-quality data, the DP-GAN-HD model can support the development of personalized treatment plans. For example, in cancer treatment, the generated patient data can be used to simulate the effects of different treatment regimens, helping doctors select the most suitable treatment strategy. Moreover, the generated data can be used for drug response prediction, offering data support for precision medicine.

(3) Medical Data Sharing and Collaboration. Privacy protection is a critical challenge in cross-institutional medical data sharing. The synthetic data generated by the DP-GAN-HD model can facilitate data sharing and collaboration between healthcare institutions without disclosing patient privacy. For example, the generated data can be used in multi-center clinical studies, enhancing the statistical power and generalizability of research outcomes.

(4) Medical Data Augmentation and Imbalance Problem Resolution. In medical datasets, some categories of data may be scarce, leading to class imbalance issues in model training. The DP-GAN-HD model can effectively address this problem by generating data for underrepresented categories, thus improving the performance of ML models.
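For the augmentation scenario in item (4), one simple policy is to request enough synthetic records to bring every class up to the size of the largest one. The sketch below only computes those counts; the balancing target, the function name, and the toy labels are assumptions for illustration, and other targets may suit a given study better.

```python
from collections import Counter

def synthetic_counts_needed(labels):
    """Number of synthetic records per class needed to match the largest class."""
    counts = Counter(labels)
    target = max(counts.values())
    return {cls: target - n for cls, n in counts.items()}

# Toy dataset: 90 records of one class and 10 of another.
labels = ["healthy"] * 90 + ["diabetes"] * 10
needed = synthetic_counts_needed(labels)
```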

In summary, applying the DP-GAN-HD model in intelligent healthcare protects patient privacy while providing high-quality data support for medical research, clinical diagnosis, and treatment plan design, thereby driving the development of AI in healthcare.
